Simple Illustration of Programmable Backpropagation

Backpropagation Derivation

Backpropagation has always been one of my weak spots in machine learning. I have understood it many times, yet I keep forgetting how it really works and only remember that it is basically the multivariable chain rule. I clearly remember that Andrew Ng once joked that he sometimes could not remember how backpropagation works, so he often had to work through it again before lecturing in his machine learning courses. Even after refreshing my memory with related materials, I never understood how such tedious and complicated calculus could be programmed into the machine learning tools we use for neural networks.

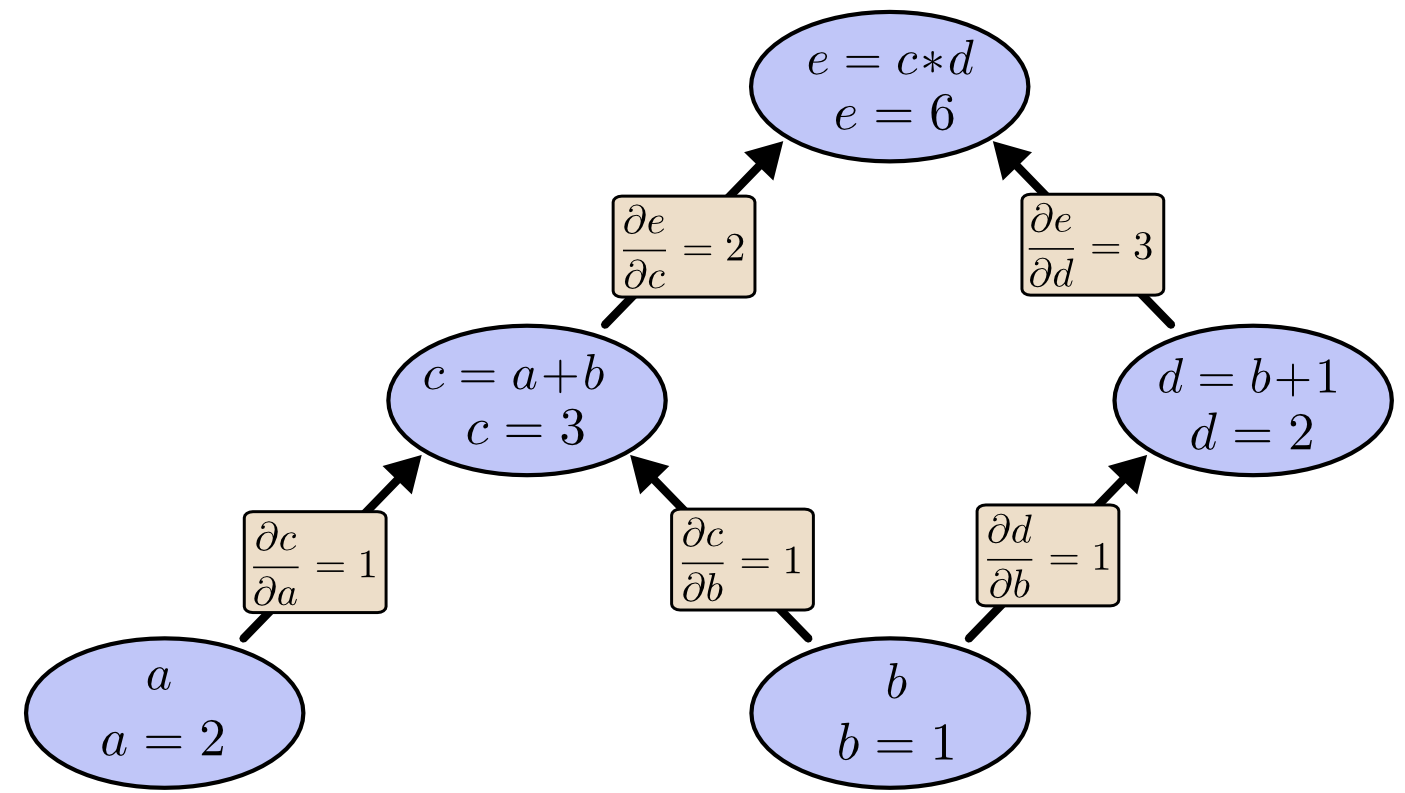

If you know how to calculate \(\frac{\partial{e}}{\partial{b}}\) in the figure above, you basically know how to do backpropagation.
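To make that concrete, here is a small Python sketch. I am assuming a graph of the kind often used for this illustration, \(c = a + b\), \(d = b + 1\), \(e = c \cdot d\); the graph in the figure may differ. Because \(b\) reaches \(e\) along two paths, the multivariable chain rule sums both contributions: \(\frac{\partial e}{\partial b} = \frac{\partial e}{\partial c}\frac{\partial c}{\partial b} + \frac{\partial e}{\partial d}\frac{\partial d}{\partial b} = d + c\). The function names are mine, and the finite-difference check is only a sanity test.

```python
# Tiny computational graph, assumed for illustration:
#   c = a + b,  d = b + 1,  e = c * d
# b feeds e through two paths (via c and via d), so the
# multivariable chain rule adds the contributions of both paths.

def forward(a, b):
    c = a + b
    d = b + 1
    e = c * d
    return c, d, e

def backward(a, b):
    """Return (de/da, de/db) by accumulating derivatives from e backward."""
    c, d, e = forward(a, b)
    de_de = 1.0
    # Local derivatives of e = c * d.
    de_dc = de_de * d
    de_dd = de_de * c
    # Propagate to the inputs; b receives one contribution per path.
    de_da = de_dc * 1.0                  # dc/da = 1
    de_db = de_dc * 1.0 + de_dd * 1.0    # dc/db = 1, dd/db = 1
    return de_da, de_db

def numerical_grad(f, a, b, h=1e-6):
    """Central finite differences, used only to sanity-check backward()."""
    da = (f(a + h, b) - f(a - h, b)) / (2 * h)
    db = (f(a, b + h) - f(a, b - h)) / (2 * h)
    return da, db

if __name__ == "__main__":
    a, b = 2.0, 1.0
    print(backward(a, b))  # (2.0, 5.0)
    print(numerical_grad(lambda x, y: forward(x, y)[2], a, b))
```

With \(a = 2\) and \(b = 1\) this gives \(\partial e/\partial b = 2 + 3 = 5\). Backpropagation is the same bookkeeping done once from the output backward, reusing intermediate derivatives such as \(\partial e/\partial c\) instead of recomputing them for every input.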

Here is a very simple and good illustration of backpropagation. However, such materials are often over-simplified: the network they present is not even the ordinary neural network we use nowadays, let alone one that includes activation functions.

Here, I have laid out the workflow of backpropagation neatly so that readers can easily see the programmable logic inside the derivations. Typing equations in MathJax is extremely tedious, so I wrote the document in Word and converted it to a PDF for you guys to download.
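For reference, the workflow can be summarized by the standard backpropagation equations for a fully connected network. This is my summary in common textbook notation, which may differ from the notation in the PDF. It assumes sigmoid activations \(\sigma\), the squared-error loss \(C\), layers \(l = 2, \dots, L\) with \(a^{1} = x\) as the input, and \(\odot\) for element-wise multiplication.

\[
\begin{aligned}
z^{l} &= W^{l} a^{l-1} + b^{l}, \qquad a^{l} = \sigma(z^{l}), \qquad C = \tfrac{1}{2}\lVert a^{L} - y \rVert^{2},\\
\delta^{L} &= (a^{L} - y) \odot \sigma'(z^{L}),\\
\delta^{l} &= \big((W^{l+1})^{\top} \delta^{l+1}\big) \odot \sigma'(z^{l}),\\
\frac{\partial C}{\partial b^{l}} &= \delta^{l}, \qquad \frac{\partial C}{\partial W^{l}} = \delta^{l} \, (a^{l-1})^{\top},\\
\sigma'(z) &= \sigma(z)\,\big(1 - \sigma(z)\big).
\end{aligned}
\]

Every right-hand side uses only quantities saved during the forward pass (\(z^{l}\) and \(a^{l}\)) or the \(\delta\) from the layer just above, so the whole derivation becomes a single loop running backward over the layers.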

You can download my simple illustration of programmable backpropagation here.

Backpropagation was always like a black box to me when I was working on machine learning tasks. I hope these materials will always remind me of the mechanism of backpropagation and of the importance of mathematics in computer science.

Backpropagation Implementation

Here is a code example of backpropagation in a neural network. In case the author changes or removes the content, you may also download it from my site. The author also wrote blog posts (page 1, page 2) on using this backpropagation implementation to solve classification problems.

Although it might twist your brain, the author’s implementation follows exactly the same logic as mine (he used the sigmoid function as the activation function and the sum-of-squares error as the loss function). The implementation is very neat and uses only NumPy. I think it would take me a very long time to write it myself.

The key code for backpropagation is as follows:

```python
def update_mini_batch(self, mini_batch, eta):
```
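To make the idea concrete, here is a minimal NumPy sketch of a mini-batch update driven by backpropagation. It is my own illustration, not the author's code: it assumes sigmoid activations, the per-example loss \(C = \tfrac{1}{2}\lVert a^{L} - y \rVert^{2}\), column-vector inputs, and weights and biases passed in as plain Python lists instead of being stored on a class. The helper name `backprop` is hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_prime(z):
    s = sigmoid(z)
    return s * (1.0 - s)

def backprop(weights, biases, x, y):
    """Gradients of C = 0.5 * ||a_L - y||^2 for one column-vector example.

    weights[i] has shape (n_out, n_in); biases[i] has shape (n_out, 1).
    Returns (grad_w, grad_b) with the same shapes as weights and biases.
    """
    # Forward pass: keep every pre-activation z and every activation a.
    activation = x
    activations = [x]
    zs = []
    for w, b in zip(weights, biases):
        z = w @ activation + b
        zs.append(z)
        activation = sigmoid(z)
        activations.append(activation)

    grad_w = [np.zeros_like(w) for w in weights]
    grad_b = [np.zeros_like(b) for b in biases]

    # Output-layer error: dC/da_L = (a_L - y) for the squared-error loss.
    delta = (activations[-1] - y) * sigmoid_prime(zs[-1])
    grad_b[-1] = delta
    grad_w[-1] = delta @ activations[-2].T

    # Hidden layers: push the error backward through the transposed weights.
    for l in range(2, len(weights) + 1):
        delta = (weights[-l + 1].T @ delta) * sigmoid_prime(zs[-l])
        grad_b[-l] = delta
        grad_w[-l] = delta @ activations[-l - 1].T
    return grad_w, grad_b

def update_mini_batch(weights, biases, mini_batch, eta):
    """One gradient-descent step with the gradient averaged over mini_batch.

    mini_batch is a list of (x, y) column-vector pairs; eta is the learning rate.
    Returns new (weights, biases) lists instead of modifying them in place.
    """
    sum_w = [np.zeros_like(w) for w in weights]
    sum_b = [np.zeros_like(b) for b in biases]
    for x, y in mini_batch:
        gw, gb = backprop(weights, biases, x, y)
        sum_w = [sw + g for sw, g in zip(sum_w, gw)]
        sum_b = [sb + g for sb, g in zip(sum_b, gb)]
    m = len(mini_batch)
    new_weights = [w - (eta / m) * sw for w, sw in zip(weights, sum_w)]
    new_biases = [b - (eta / m) * sb for b, sb in zip(biases, sum_b)]
    return new_weights, new_biases
```

The output-layer error uses \(a^{L} - y\), which is the derivative of \(\tfrac{1}{2}\lVert a^{L} - y \rVert^{2}\); with a plain sum of squares (no \(\tfrac{1}{2}\)), every gradient simply doubles.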

By the way, his weight and bias matrices were organized like this:

```python
class Network(object):
```
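One common way to organize the parameters is sketched below. It is my own illustration, not a copy of the author's class: the `sizes` and `seed` constructor arguments and the `feedforward` method are assumptions. Each non-input layer gets one weight matrix of shape (n_out, n_in) and one bias column vector of shape (n_out, 1), stored in Python lists.

```python
import numpy as np

class Network:
    """Hypothetical minimal layout: one weight matrix and one bias vector
    per non-input layer, kept in Python lists."""

    def __init__(self, sizes, seed=None):
        # sizes[i] is the number of neurons in layer i, e.g. [784, 30, 10].
        rng = np.random.default_rng(seed)
        self.sizes = sizes
        self.num_layers = len(sizes)
        # biases[i]: shape (n_out, 1), one bias per neuron in layer i + 1.
        self.biases = [rng.standard_normal((n_out, 1)) for n_out in sizes[1:]]
        # weights[i]: shape (n_out, n_in), so weights[i][j, k] connects
        # neuron k in layer i to neuron j in layer i + 1.
        self.weights = [rng.standard_normal((n_out, n_in))
                        for n_in, n_out in zip(sizes[:-1], sizes[1:])]

    def feedforward(self, a):
        """Propagate a column vector of shape (sizes[0], 1) through a sigmoid network."""
        for w, b in zip(self.weights, self.biases):
            a = 1.0 / (1.0 + np.exp(-(w @ a + b)))
        return a

net = Network([3, 4, 2], seed=0)
print([w.shape for w in net.weights])  # [(4, 3), (2, 4)]
print([b.shape for b in net.biases])   # [(4, 1), (2, 1)]
```

Storing each weight matrix as (n_out, n_in) makes every layer a single product `w @ a + b`, and it is also why the backward pass multiplies the error by the transposed matrix.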

I remember that when I took the GRE years ago, one of the topics was “human beings become stupid as technology develops”. I agree with it to some extent, because relying on too many tools when we code or do numerical analysis can make us stupid.

I have seen many deep learning codebases that use only NumPy, and I admire them very much. We are over-using the autograd features of modern tools such as TensorFlow, and as a result the learning algorithms we develop become black boxes, which is pretty bad. Of course, autograd is not the only reason we use such modern tools.

We have to keep in mind that mathematics always comes first even if we are computer scientists.
